Neural networks are machine learning (ML) models, loosely inspired by the way neurons in the human brain are connected, and they are a key component of modern artificial intelligence (AI). They have transformed fields such as computer vision, natural language processing, and pattern recognition. A neural network consists of interconnected nodes, called neurons, organized in layers. By adjusting its parameters on training data, the network learns to recognize patterns and to make predictions or decisions about new inputs.

At the core of a neural network are neurons, which receive input signals, process them, and produce an output. A neuron typically computes a weighted sum of its inputs, adds a bias term, and passes the result through an activation function such as the sigmoid or ReLU. The activation introduces non-linearity: without it, a stack of layers would collapse into a single linear transformation. The weight on each input determines how strongly that input influences the neuron's output, and therefore which features or patterns in the data the neuron responds to.
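The computation of a single neuron can be sketched in a few lines of plain Python. This is a minimal illustration, not code from any library: the function names `sigmoid`, `relu`, and `neuron` and the example weights are hypothetical. The neuron computes `activation(w1*x1 + w2*x2 + ... + b)`.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z: float) -> float:
    """Rectified linear unit: passes positive values through, zeroes out negatives."""
    return max(0.0, z)

def neuron(inputs, weights, bias, activation=sigmoid):
    """Hypothetical helper: weighted sum of the inputs plus a bias, then a non-linear activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)

# Hypothetical example values: the large weight on the first input makes the
# neuron far more sensitive to that feature than to the second one.
print(neuron([1.0, 0.5], [2.0, -0.1], bias=-0.5))
print(neuron([1.0, 0.5], [2.0, -0.1], bias=-0.5, activation=relu))
```

Swapping the activation changes only the final squashing step; the weighted sum is the same in both calls.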

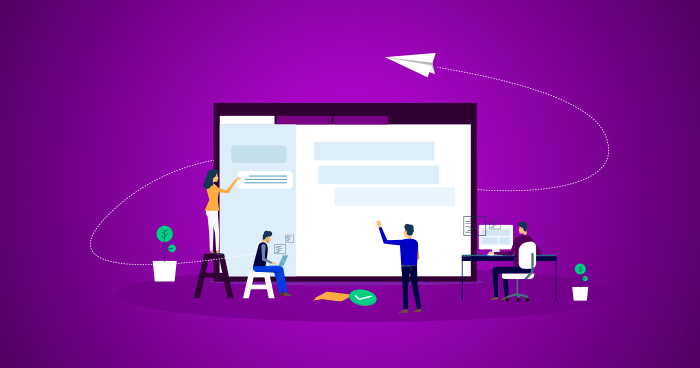

Neural networks typically consist of an input layer, one or more hidden layers, and an output layer. The hidden layers transform the input into progressively more useful intermediate representations (features), and the output layer produces the final result or prediction. Training minimizes a loss function that measures the difference between the network's predictions and the desired outputs: backpropagation computes the gradient of the loss with respect to every weight, and an optimization algorithm such as gradient descent uses those gradients to update the weights.
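To make the layer structure and the training loop concrete, here is a minimal plain-Python sketch of a network with one hidden layer, trained with backpropagation and stochastic gradient descent on the XOR problem. The class `TinyNet`, the layer sizes, the learning rate, the epoch count, and the squared-error loss are all illustrative assumptions; practical code would use a library such as PyTorch or TensorFlow, which compute these gradients automatically.

```python
import math
import random

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

class TinyNet:
    """Hypothetical example: input layer -> one hidden layer of sigmoid units -> one sigmoid output."""

    def __init__(self, n_inputs: int, n_hidden: int, seed: int = 0):
        rng = random.Random(seed)
        # Small random initial weights break the symmetry between hidden units.
        self.w_hidden = [[rng.uniform(-1.0, 1.0) for _ in range(n_inputs)] for _ in range(n_hidden)]
        self.b_hidden = [0.0] * n_hidden
        self.w_out = [rng.uniform(-1.0, 1.0) for _ in range(n_hidden)]
        self.b_out = 0.0

    def forward(self, x):
        """Return the hidden activations and the network's output for input vector x."""
        h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
             for row, b in zip(self.w_hidden, self.b_hidden)]
        y = sigmoid(sum(w * hj for w, hj in zip(self.w_out, h)) + self.b_out)
        return h, y

    def train_step(self, x, target: float, lr: float) -> float:
        """One gradient-descent update on a single example; returns the loss before the update."""
        h, y = self.forward(x)
        loss = 0.5 * (y - target) ** 2  # squared-error loss
        # Backpropagation: apply the chain rule from the loss back to each layer's
        # pre-activation (computed before any weight changes).
        delta_out = (y - target) * y * (1.0 - y)
        delta_hidden = [delta_out * w * hj * (1.0 - hj) for w, hj in zip(self.w_out, h)]
        # Gradient descent: move every parameter a small step against its gradient.
        for j, hj in enumerate(h):
            self.w_out[j] -= lr * delta_out * hj
        self.b_out -= lr * delta_out
        for j, dj in enumerate(delta_hidden):
            for i, xi in enumerate(x):
                self.w_hidden[j][i] -= lr * dj * xi
            self.b_hidden[j] -= lr * dj
        return loss

def mean_loss(net, data):
    return sum(0.5 * (net.forward(x)[1] - t) ** 2 for x, t in data) / len(data)

# XOR cannot be separated by a single neuron, which is why a hidden layer is needed.
XOR = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]

net = TinyNet(n_inputs=2, n_hidden=4, seed=0)
loss_before = mean_loss(net, XOR)
for epoch in range(10000):
    for x, t in XOR:
        net.train_step(x, t, lr=0.5)
loss_after = mean_loss(net, XOR)
print(f"mean loss before: {loss_before:.4f}, after: {loss_after:.4f}")
for x, _ in XOR:
    print(x, round(net.forward(x)[1], 3))
```

Because the weights start at random values, a network this small can occasionally settle in a poor local minimum; a different seed or more hidden units usually helps.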

One of the main advantages of neural networks is their ability to learn from large amounts of data and generalize to examples they have not seen. Given enough training data, they can capture intricate, non-linear relationships in complex, high-dimensional inputs such as images, audio, and text. Convolutional neural networks (CNNs) excel at computer vision tasks such as image recognition: they slide small learned filters across an image, so early layers detect local patterns such as edges and deeper layers combine them into larger structures, building a spatial hierarchy of features. Recurrent neural networks (RNNs), on the other hand, are well suited to sequential data, as in speech recognition or language modeling, because they maintain a hidden state that carries information from earlier elements of a sequence to later ones.
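The two core operations behind CNNs and RNNs can be sketched in plain Python: a 2D convolution that reuses one small kernel at every position of an image, and a simple recurrent cell whose hidden state is carried from step to step. These are minimal illustrations under stated assumptions: the function names `conv2d` and `rnn`, the single-channel image, and the scalar hidden state are hypothetical simplifications, and real CNNs and RNNs learn many filters and vector-valued states.

```python
import math

def conv2d(image, kernel):
    """Hypothetical helper: 'valid' 2D convolution (no padding, stride 1).

    Deep learning libraries call this operation convolution; strictly speaking the
    kernel is not flipped, so it is cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    out = [[0.0] * out_w for _ in range(out_h)]
    for r in range(out_h):
        for c in range(out_w):
            # The same small set of weights is reused at every position (weight sharing).
            out[r][c] = sum(image[r + i][c + j] * kernel[i][j]
                            for i in range(kh) for j in range(kw))
    return out

def rnn(sequence, w_x, w_h, b, h0=0.0):
    """Hypothetical scalar RNN cell: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b).

    Returns the hidden state after every step; the last one summarizes the whole sequence.
    """
    h = h0
    states = []
    for x in sequence:
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# A 2x2 kernel that responds to the difference between a pixel and its lower-right neighbor.
image = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
kernel = [[1, 0], [0, -1]]
print(conv2d(image, kernel))

# Same inputs in a different order: the final hidden state differs, because the
# RNN carries information forward through time.
print(rnn([1.0, 0.0], w_x=1.0, w_h=0.5, b=0.0)[-1])
print(rnn([0.0, 1.0], w_x=1.0, w_h=0.5, b=0.0)[-1])
```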

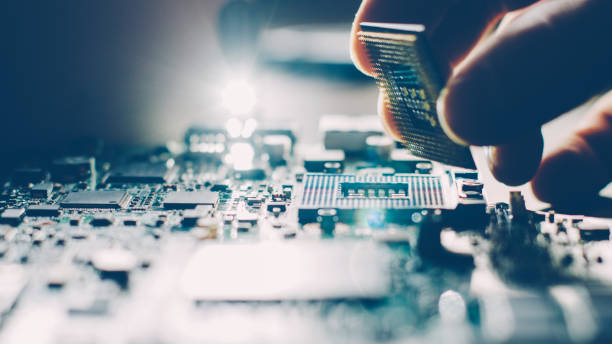

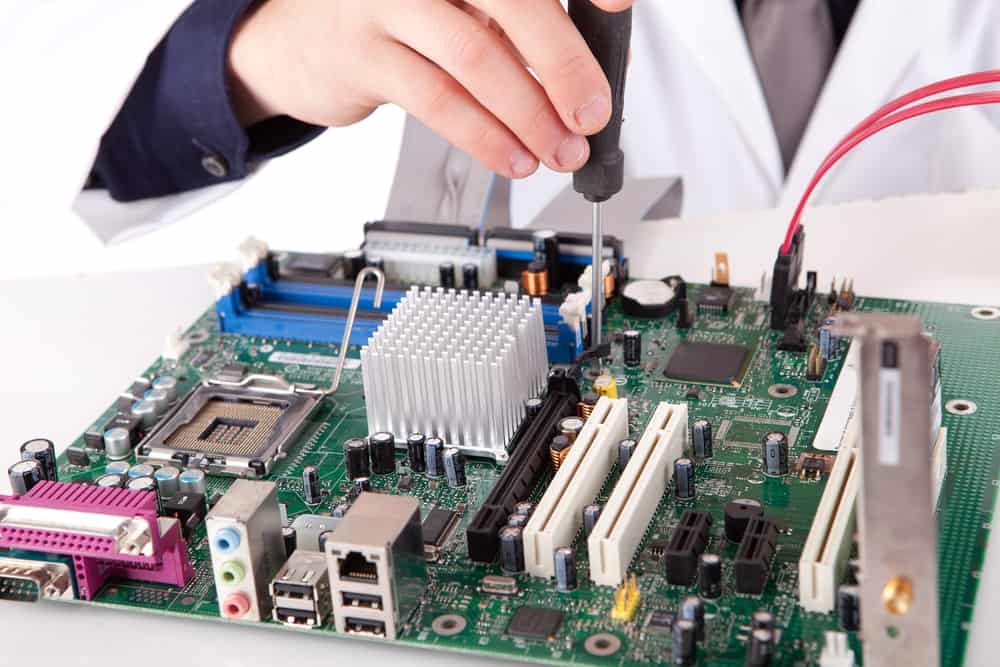

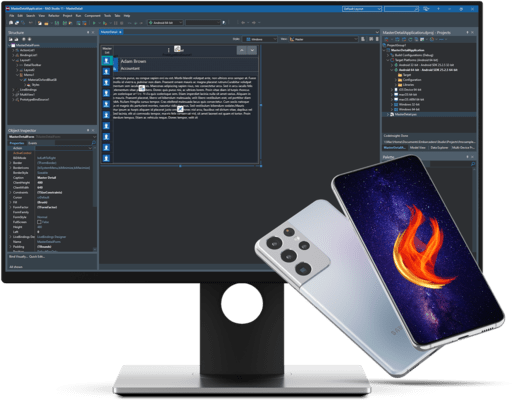

The widespread adoption of neural networks has been fueled by advances in hardware, such as graphics processing units (GPUs) and specialized neural processing units (NPUs), which accelerate both training and inference. In addition, open-source libraries such as TensorFlow and PyTorch have made it easier for researchers and developers to build and deploy neural network models.

As the field evolves, researchers continue to develop new architectures. Generative adversarial networks (GANs) train a generator network against a discriminator network to produce realistic synthetic data, and transformers, built around the attention mechanism, have become the dominant architecture for natural language processing. These advances keep extending what neural networks can achieve and open up new AI and ML applications across many industries.
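The attention mechanism at the heart of transformers can be sketched as scaled dot-product attention, softmax(QKᵀ / √d_k)·V. This minimal plain-Python version is an illustration only: it omits the learned query, key, and value projections, the multiple heads, and the masking that real transformer layers use, and the function names and toy token vectors are hypothetical.

```python
import math

def softmax(xs):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Hypothetical helper: scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, values))
                        for j in range(len(values[0]))])
    return outputs

# Hypothetical toy sequence of three token vectors used as Q, K and V (self-attention).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention(tokens, tokens, tokens):
    print([round(x, 3) for x in row])
```

Each output row mixes information from every position in the sequence, which is what lets a transformer relate distant words without a recurrent hidden state.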
