Usenet servers have historically operated under a philosophy of minimal intervention, allowing users to freely exchange information and engage in discussions without centralized oversight or content moderation. However, as online communities have grown and diversified, the issue of content moderation on Usenet servers has become increasingly complex, raising questions about the balance between free speech and the need for regulation.

Challenges of Content Moderation:

One of the primary challenges of content moderation on Usenet servers is the sheer volume and diversity of content exchanged within the network. Usenet hosts discussions on a wide range of topics, from academic research and technical support to entertainment and hobbies. As a result, identifying and moderating inappropriate or harmful content can be a daunting task, requiring significant resources and expertise.

Moreover, Usenet’s decentralized architecture presents logistical challenges for content moderation. Unlike centralized social media platforms, Usenet servers are independently operated and interconnected, making it difficult to implement uniform content moderation policies across the entire network. Each server may have its own rules and guidelines, further complicating efforts to enforce consistent moderation standards.

Balancing Free Speech and Regulation:

The issue of content moderation on Usenet servers raises fundamental questions about the balance between free speech and the need to address harmful or illegal content. Advocates of free speech argue that Usenet’s open and decentralized nature fosters diverse perspectives and encourages uninhibited discourse, both of which they consider essential for a healthy democracy and intellectual exchange.

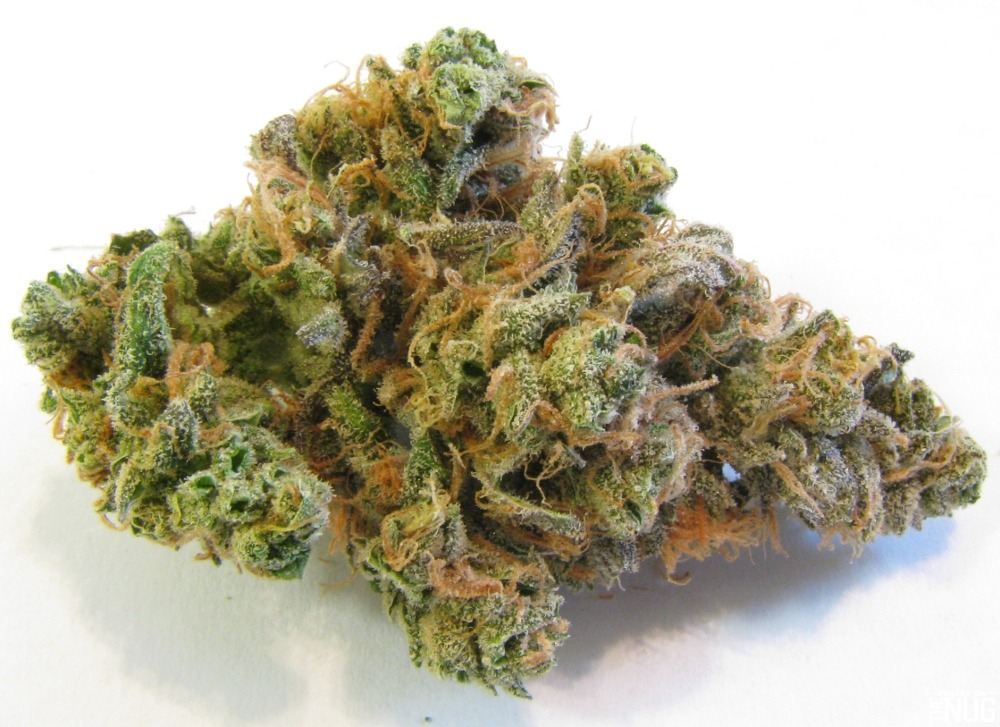

However, critics point to the proliferation of harmful content on Usenet, including spam, hate speech, and illegal material such as child pornography and pirated content. They argue that unregulated access to such content poses risks to users’ safety and well-being, necessitating intervention to mitigate harm and uphold community standards.

Approaches to Content Moderation:

Usenet server operators employ various approaches to content moderation, ranging from hands-off policies to proactive filtering and enforcement of community guidelines. Some servers rely on user-driven moderation systems, empowering community members to report and flag inappropriate content for review. Others employ automated filtering algorithms or manual moderation teams to monitor and remove objectionable material.
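As a rough illustration of how a single server might combine automated filtering with user reports, the sketch below checks one article against a server-local policy. Everything in it is an assumption made for illustration: the `ModerationPolicy` class, its field names and thresholds, and the `review_article` function are hypothetical and do not describe any particular news server software. It relies only on the standard `Newsgroups` and `Subject` article headers.

```python
from dataclasses import dataclass, field
from email import message_from_string


@dataclass
class ModerationPolicy:
    """Hypothetical per-server policy; all names and defaults are illustrative."""
    max_crossposts: int = 5        # reject articles sent to more groups than this
    report_threshold: int = 3      # hold articles once this many users report them
    blocked_terms: set = field(default_factory=set)  # lowercase subject phrases


def review_article(raw_article: str, reports: int, policy: ModerationPolicy) -> str:
    """Return 'accept', 'hold', or 'reject' for a single article."""
    msg = message_from_string(raw_article)

    # Automated rule 1: excessive cross-posting is a common spam signal.
    groups = [g.strip() for g in msg.get("Newsgroups", "").split(",") if g.strip()]
    if len(groups) > policy.max_crossposts:
        return "reject"

    # Automated rule 2: simple phrase match on the subject line.
    subject = msg.get("Subject", "").lower()
    if any(term in subject for term in policy.blocked_terms):
        return "reject"

    # User-driven rule: enough reports sends the article to manual review.
    if reports >= policy.report_threshold:
        return "hold"

    return "accept"
```

Because each operator would define its own `ModerationPolicy`, two servers could reach different decisions about the same article, which mirrors the lack of uniform standards across the network.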

Finding the right balance between free speech and regulation is an ongoing challenge for Usenet server operators. It requires careful consideration of factors such as community norms, legal obligations, and technological capabilities. Ultimately, effective content moderation on Usenet servers requires collaboration among stakeholders, including server operators, users, and regulatory authorities, to develop and implement policies that promote responsible online behavior while respecting the principles of free speech and open discourse.
